We often hear that we live in a digital world — but what does this mean, exactly? It means a lot more than just dependence on smartphones and computers. It is a comment on how we codify and process the record of our society’s activities.

A century ago, the world was an analog place. Information and data were stored on paper. If you wanted to transmit information, you would have to physically send a message to its destination. The digital age relieves us of these constraints by helping translate any communicable information into a shared language, capable of being transmitted across electric signals and electromagnetic waves at light speeds.

All global economic activity and coordination are entirely dependent on the computer networks responsible for carrying our information. So much so that organizations like the United Nations are claiming that access to the Internet should now be considered a basic human right.

While most of the world already treats Internet access as a utility, without which most economic activity would come to a halt, one third of all people still remain entirely disconnected.

Furthermore, the nature of the Internet as a common substrate connecting users on an equal footing is now totally different. Instead, the Internet looks more like a number of private network silos connected by legacy wires and transport protocols.

If the Internet is indeed a utility, networked computing is also one of the only utilities where access is entirely mediated by private companies.

Not only does this raise significant questions regarding the future of accessibility, it also raises concerns about how the Internet’s central role in society should be maintained and safeguarded for the future.

While the past fifty years have been dedicated to solving the technical problems of digital networking, the next fifty will likely be devoted to the many emerging social problems of computer networking.

The Current Status of Connectivity

The sheer scale of activity on digital networks today is unfathomably large. According to the latest estimates, 328 million terabytes of data are created each day. There are billions of smartphone devices sold every year, and though it’s difficult to estimate the total number of individually connected devices, some estimates put this number between 20 and 50 billion devices.

This thing called the Internet, in other words, is in a whole universe of its own. “The Internet is no longer tracking the population of humans and the level of human use. The growth of the Internet is no longer bounded by human population growth, nor the number of hours in the day when humans are awake,” writes Geoff Huston, chief scientist at APNIC.

The infrastructure that makes the scale of such communications possible is similarly astounding. This is made up of a massive global web of physical hardware, consisting of over 5 billion kilometers of fiber optic cable, over 574 active and planned submarine cables spanning over 1 million kilometers in length, and now a constellation of over 5,400 satellites offering connectivity from low earth orbit.

The Internet is a dynamically evolving technology. It is so large and complex that it cannot be controlled or even wholly understood from a single, central point. It is this quality that has contributed to the Internet’s resilience and success in scaling from a user base of merely a few hundred computers in the 1980s to billions today. However, the absence of a designated steward for the Internet also poses challenges for its continued maintenance.

Given the Internet’s absolutely central role in economics, our daily communications, and our access to information, it’s important to understand the principles and realities upon which it operates today.

But before we trace the evolution of the Internet, and the many shapes it took on over its fifty-year history, let us first examine the singular theory that birthed digital communications as such: Claude Shannon’s information theory.

The Theory of Information

In the analog era, every kind of data had a designated medium. Text was transmitted via paper. Images were transmitted via canvas or photographs. Speech was communicated via sound waves.

One of the greatest breakthroughs of the analog era was the invention of the telephone by Alexander Graham Bell in 1876. It enabled speech to travel beyond direct physical proximity. Sound waves on one end of the phone line were converted into electrical frequencies carried through a wire, whereupon those frequencies were reproduced at the other end.

But although funneling sound waves through a copper wire, rather than the atmosphere, extended the range of conversations, this system still suffered from all the same drawbacks of communicating through sound waves. Often, it was an unreliable process. Just as background noise makes it harder to hear someone speak, interference in the transfer line would introduce noise and scramble the message coming across the wire. Once noise was introduced, there was no real way to remove it and restore the original message. Even repeaters, which amplified the signals, also had the adverse effect of amplifying the noise. This meant that over enough of a distance, the original message could become incomprehensible.

Still, the phone companies tried to make it work. The first transcontinental line was established in 1914, connecting customers between San Francisco and New York. It took 3,400 miles of wire hung from 130,000 poles to build.

In those days, the biggest telephone provider was the American Telephone and Telegraph Company (AT&T), which had absorbed the Bell Telephone Company in 1899. As long-distance communications exploded across the United States, an internal research department called ‘Bell Labs’, consisting of electrical engineers and mathematicians, was thinking deeply about how to continue expanding the network’s capacity. One of these engineers was Claude Shannon.

In 1941, Shannon arrived at Bell Labs from MIT, where the ideas behind the computer revolution were in their infancy. He studied under Norbert Wiener, the father of cybernetics, and worked on Vannevar Bush’s Differential Analyzer, a kind of mechanical computer capable of solving differential equations.

Source: Computer History Museum

It was from his experience with the Differential Analyzer, a machine that used arbitrarily designed circuits to produce specific calculations, that Shannon developed the idea for his Master’s thesis. In 1937, he submitted “A Symbolic Analysis of Relay and Switching Circuits.” It was a breakthrough paper, which pointed out that Boolean algebra could be represented physically in electrical circuits. The beautiful thing about Boolean operations is that they work on only two values, on or off.
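The correspondence at the heart of the thesis can be sketched in a few lines of Python. This is a minimal illustration rather than Shannon’s own notation: switches wired in series behave like AND, switches wired in parallel behave like OR, and an inverted contact behaves like NOT. All function names below are hypothetical.

```python
from itertools import product

def series(a: bool, b: bool) -> bool:
    """Two switches in series: current flows only if both are closed (AND)."""
    return a and b

def parallel(a: bool, b: bool) -> bool:
    """Two switches in parallel: current flows if either is closed (OR)."""
    return a or b

def inverted(a: bool) -> bool:
    """A contact that conducts only when its input is off (NOT)."""
    return not a

def xor_circuit(a: bool, b: bool) -> bool:
    """Exclusive-or wired up from nothing but the three primitives above."""
    return parallel(series(a, inverted(b)), series(inverted(a), b))

def truth_table(circuit):
    """Evaluate a two-input circuit on every on/off combination."""
    return {(a, b): circuit(a, b) for a, b in product([False, True], repeat=2)}

if __name__ == "__main__":
    for inputs, output in truth_table(xor_circuit).items():
        print(inputs, "->", output)
```

Because every primitive takes and returns a simple on/off value, circuits compose freely, which is what made the approach a standard way to design logic.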

It was an elegant way of standardizing the design of computer logic. And, if the computer’s operations could be standardized, perhaps the inputs the computer operated on could be standardized too.

Shannon began working at Bell Labs during the Second World War, mainly on cryptography. And it was this experience that led him to make his greatest contribution yet.

At the time, there was no clear definition of information. It was a synonym for meaning or significance, but on the whole, its essence was ephemeral. As Shannon studied the structures of messages and language systems, he realized there is a mathematical structure underlying information. It meant that in fact, information could be quantified. However, to do so, it would need a unit of measurement.

Shannon popularized the term ‘bit’ (short for “binary digit,” a coinage he credited to his colleague John Tukey) to represent a quantum of information, the smallest singular amount of it. This framework translated perfectly to the electronic signals in a digital computer, which could only be in one of two states: on or off. Shannon published these insights in “A Mathematical Theory of Communication” in 1948, just one year after the invention of the transistor by his colleagues at Bell Labs.
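One way to make “quantifying information” concrete is Shannon’s entropy formula, H = -Σ p·log2(p), which gives the average number of bits per symbol for a source whose symbol probabilities are known. The sketch below estimates those probabilities from character frequencies in a string. The function name and the sample strings are illustrative assumptions.

```python
import math
from collections import Counter

def entropy_bits(message: str) -> float:
    """Average information per symbol, in bits, from observed symbol frequencies."""
    if not message:
        return 0.0
    counts = Counter(message)
    total = len(message)
    # Each symbol with probability p contributes -p * log2(p) bits.
    return sum(-(n / total) * math.log2(n / total) for n in counts.values())

if __name__ == "__main__":
    for sample in ("aaaa", "abab", "abcd"):
        print(repr(sample), entropy_bits(sample))
```

A string that repeats one symbol carries no information per symbol, two equally likely symbols carry one bit each, and four equally likely symbols carry two bits each.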

However, the paper didn’t simply discuss information encoding; it also created a mathematical framework to categorize the communication process as such. For instance, Shannon noted that all information traveling from a sender to a recipient must pass through a channel, whether that channel be a wire or the atmosphere.

Shannon’s stunning insight was that every channel has a threshold, or maximum amount of information that can be delivered reliably to a recipient. This implied that as long as the quantity of information carried through the channel fell below the threshold, it could be delivered to the recipient intact, even if a ton of noise scrambled some of the message during transmission. He proved, mathematically, that any message could be error-corrected into its original state if it traveled through a large enough channel.
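A standard textbook special case gives a feel for this threshold. For a binary symmetric channel, one that flips each transmitted bit independently with probability p, the capacity is 1 - H(p) bits per transmitted bit, where H is the binary entropy function. The sketch below computes it; the function names are illustrative, and the binary symmetric channel is a simplified model rather than a description of any particular phone line.

```python
import math

def binary_entropy(p: float) -> float:
    """Entropy, in bits, of a coin that lands heads with probability p."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)

def bsc_capacity(flip_probability: float) -> float:
    """Capacity of a binary symmetric channel, in bits per transmitted bit."""
    if not 0.0 <= flip_probability <= 1.0:
        raise ValueError("flip_probability must be between 0 and 1")
    return 1.0 - binary_entropy(flip_probability)

if __name__ == "__main__":
    for p in (0.0, 0.01, 0.1, 0.5):
        print(f"flip probability {p}: capacity {bsc_capacity(p):.3f} bits per bit")
```

A channel that flips one bit in ten still has positive capacity, which is why noise limits the rate of reliable communication rather than ruling it out. Only at a flip probability of one half, where the output is pure coin-tossing, does the capacity drop to zero.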

The enormity of this revolution is difficult to communicate today, mainly because we’re swimming in its consequences. However, the implication of Shannon’s theory was that everything from text to images, films, and even genetic material could be translated into his informational language of bits. Essentially, it laid out the rules by which machines could talk to one another — about anything.

At the time that Shannon developed his theory, computers could not yet communicate with one another. If you wanted to transfer information from one computer to the other, you would have to literally walk over to the other computer and manually input the data yourself. However, making talking machines a reality was not far off now. After all, Shannon had just written the handbook for how to start building them.

The Dawn of the Internet

The only real, interconnected network by the mid-20th century was the telephone system. AT&T was the largest telephone network at the time. It had a monstrous web of hanging copper wires criss-crossing the continent.

The main way the telephone network worked was through circuit switching. Every pair of callers would get a dedicated “line” for the duration of their conversation. When it ended, an operator would reassign that line to connect other callers, and so on.

At the time, it was possible to get computers “on the network” by converting their digital signals into analog signals and sending those through the telephone lines, but reserving an entire line for one computer-to-computer interaction was hugely wasteful. The data itself would take only a fraction of a second to go through, and in the time it took to switch the circuit, hundreds of packets could have been sent through that line.

Leonard Kleinrock, a student of Shannon’s at MIT, was interested in what the design of a digital communications network might look like: one that could transmit digital bits instead of analog sound waves.

His solution, which he wrote up as his graduate dissertation, was a packet-switching system, which involved breaking up digital messages into a series of smaller pieces called packets. Packet-switching was a design that allowed for resource sharing among connected computers. Rather than having one computer’s long communiqué take up a whole line, that line could instead be shared among a number of users’ packets. It was a design that allowed for more messages to get to their destinations, more efficiently.
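A toy model of the idea, under simplifying assumptions: each message is cut into fixed-size packets tagged with a sender and a sequence number, and the shared line carries one packet from each sender in turn. The packet size, the field names, and the round-robin schedule are illustrative choices, not details taken from Kleinrock’s dissertation.

```python
from dataclasses import dataclass
from itertools import zip_longest

@dataclass(frozen=True)
class Packet:
    sender: str
    seq: int       # position of this piece within the sender's message
    payload: str

def packetize(sender: str, message: str, size: int = 4) -> list[Packet]:
    """Break a message into packets of at most `size` characters."""
    return [
        Packet(sender, seq, message[start:start + size])
        for seq, start in enumerate(range(0, len(message), size))
    ]

def share_line(*queues: list[Packet]) -> list[Packet]:
    """Interleave the senders' packets round-robin onto one shared line."""
    line = []
    for turn in zip_longest(*queues):
        line.extend(packet for packet in turn if packet is not None)
    return line

def reassemble(line: list[Packet], sender: str) -> str:
    """Pick one sender's packets off the line and restore the message."""
    pieces = sorted((p for p in line if p.sender == sender), key=lambda p: p.seq)
    return "".join(p.payload for p in pieces)

if __name__ == "__main__":
    line = share_line(packetize("A", "a long communique"), packetize("B", "hi"))
    print([f"{p.sender}{p.seq}" for p in line])
    print(reassemble(line, "A"), "|", reassemble(line, "B"))
```

In this model the short message from B goes out right after A’s first packet instead of waiting for A’s entire message to finish, which is the resource-sharing benefit the paragraph above describes.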

There would need to be a mechanism in the network that would be responsible for granting access to different packets very quickly. To prevent bottlenecks, this mechanism would also need to calculate the most efficient, opportunistic path for each packet to take to its destination. He also showed that this mechanism couldn’t be a central point in the system; it would need to be a distributed mechanism that worked at each node in the network.

Kleinrock actually approached AT&T and asked if they would be interested in implementing such a system. However, in the early sixties, most of the demand was still in analog communications. AT&T representatives told Kleinrock to just use the regular phone lines to send his digital communications, but that made no economic sense either.

“It takes you 35 seconds to dial up a call. You charge me for a minimum of 3 minutes, and I want to send a hundredth-of-a-second of data,” said Kleinrock.
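Kleinrock’s complaint can be put in numbers using only the figures from his quote. The sketch below is simple arithmetic; the function and constant names are illustrative.

```python
def line_utilization(data_seconds: float, billed_seconds: float) -> float:
    """Fraction of the billed time during which data is actually moving."""
    return data_seconds / billed_seconds

DIAL_SECONDS = 35        # time to set up the call, from the quote
BILLED_SECONDS = 3 * 60  # minimum charge of three minutes, from the quote
DATA_SECONDS = 0.01      # "a hundredth-of-a-second of data"

if __name__ == "__main__":
    used = line_utilization(DATA_SECONDS, BILLED_SECONDS)
    print(f"Share of billed time spent sending data: {used:.4%}")
    print(f"Setup time per second of data sent: {DIAL_SECONDS / DATA_SECONDS:,.0f}x")
```

On these figures, well under a hundredth of a percent of the paid-for line time would carry any data at all.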

It would take the weight of the US government to resolve this impasse and command such a network into existence. In the late 1950s, shaken after the Soviet Union’s success in launching Sputnik into orbit, the United States began investing heavily in new research and development. It created ARPA, the Advanced Research Projects Agency, which funded various research labs across the country.

Robert Taylor, tasked with monitoring the programs’ progress from the Pentagon, had three dedicated teletype terminals, one for each of three ARPA-funded programs. At a time when computers cost anywhere from $500,000 to several million dollars, seeing three terminals sitting side-by-side seemed like a tremendous waste of money.

“Once you saw that there were these three different terminals to these three distinct places the obvious question that would come to anyone's mind [was]: why don't we just have a network such that we have one terminal and we can go anywhere we want?” asked Taylor.

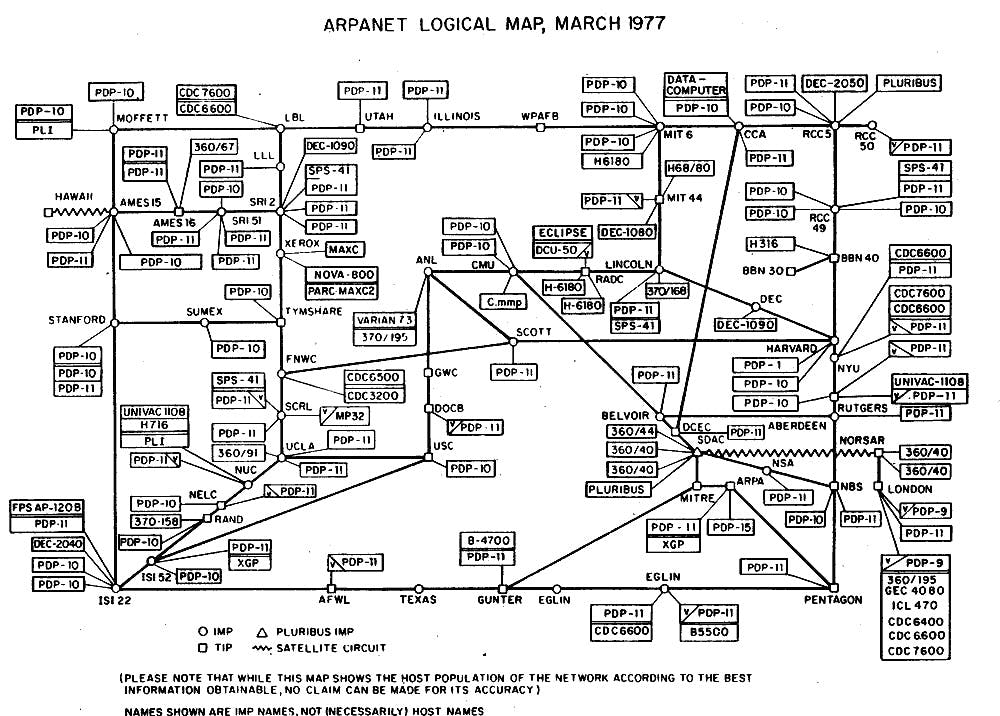

This was an application that packet-switching was designed to accommodate, and Taylor, familiar with Kleinrock’s work, commissioned an electronics company to build the kinds of packet-switchers Kleinrock had envisioned. Those packet switchers were called interface message processors, or IMPs. The first two IMPs were connected to mainframes at UCLA and Stanford Research Institute, using the telephone service between them as the communications backbone. On October 29, 1969, the first message between UCLA and SRI was sent, and ARPANET was born.

From then on, ARPANET grew at a rapid pace. By 1973, there were already 40 computers connected to IMPs across the country. It was clear that the network would only grow faster from there, and a more robust packet-switching protocol would need to be developed. ARPANET’s protocol had a couple of properties that prevented it from scaling easily. It didn’t have great ways to deal with packets arriving out of order, didn’t have a great means of flow control, and didn’t have an optimized addressing system.

Source: Computer History Museum

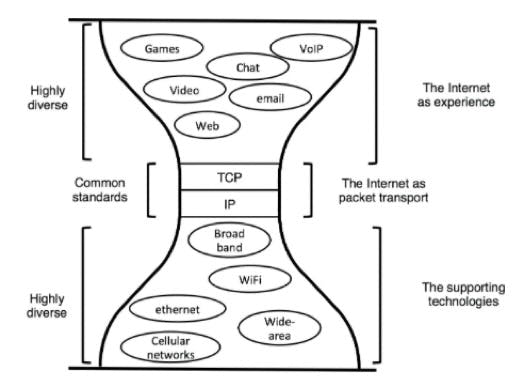

Thus, by 1974, researchers Vinton Cerf and Robert Kahn came out with “A Protocol for Packet Network Intercommunication.” In it, they outlined the ideas that would eventually become Transmission Control Protocol (TCP) and Internet Protocol (IP), the two fundamental standards of the Internet as we know it today. The core idea enabling both, however, was wrapping the packets in a little envelope called a “datagram.” That envelope would be a little header at the front of the packet that included the address it was going to, along with a number of helpful bits of info.

In Cerf and Kahn’s conception, TCP would be run on the end-nodes of the network. It would do everything from breaking messages into packets and putting the packets into datagrams to ordering the packets correctly at the receiver’s end and performing error correction.
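A heavily simplified sketch of that end-node job: number the segments, attach a checksum, and let the receiver discard damaged segments, ignore duplicates, and restore the original order. Real TCP uses byte-oriented sequence numbers, acknowledgments, retransmission, and a different checksum. The CRC-32 from Python’s `zlib` module and every name below are stand-ins, and this sketch only detects errors, where a real sender would retransmit to repair them.

```python
import zlib
from typing import NamedTuple

class Segment(NamedTuple):
    seq: int        # position of this chunk in the original message
    checksum: int   # CRC-32 of the data, computed by the sender
    data: bytes

def send(message: bytes, size: int = 8) -> list[Segment]:
    """Split a message into numbered, checksummed segments."""
    chunks = [message[i:i + size] for i in range(0, len(message), size)]
    return [Segment(seq, zlib.crc32(chunk), chunk) for seq, chunk in enumerate(chunks)]

def receive(segments: list[Segment]) -> bytes:
    """Rebuild the message from segments that may be shuffled, duplicated, or damaged."""
    good: dict[int, bytes] = {}
    for segment in segments:
        if zlib.crc32(segment.data) != segment.checksum:
            continue  # damaged in transit; a real sender would retransmit it
        good.setdefault(segment.seq, segment.data)  # keep the first intact copy
    return b"".join(good[seq] for seq in sorted(good))

if __name__ == "__main__":
    original = b"packets may arrive in any order"
    segments = send(original)
    scrambled = segments[::-1] + [segments[0]]  # reversed, plus one duplicate
    print(receive(scrambled) == original)
```

The network in between is free to reorder or duplicate segments, because everything needed to undo that lives in the small header each segment carries.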

Packets would then be routed through the network through IP, which ran on all the routers directing packets in the network. IP looked only at the destination of the packet while being entirely blind to the contents it was transmitting.
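The forwarding decision can be sketched with Python’s standard `ipaddress` module. The routing table below is invented for illustration, and longest-prefix matching is how modern IP routers choose among overlapping routes rather than something spelled out in the 1974 paper. Note that the function reads only the destination and never touches the payload.

```python
import ipaddress
from dataclasses import dataclass

@dataclass(frozen=True)
class Datagram:
    destination: str
    payload: bytes  # opaque to the router

# Hypothetical routing table: network prefix -> name of the next router.
ROUTES = {
    ipaddress.ip_network("10.0.0.0/8"): "router-a",
    ipaddress.ip_network("10.1.0.0/16"): "router-b",
    ipaddress.ip_network("0.0.0.0/0"): "default-gateway",
}

def next_hop(datagram: Datagram, routes=ROUTES) -> str:
    """Choose the next router using only the destination address."""
    address = ipaddress.ip_address(datagram.destination)
    matches = [network for network in routes if address in network]
    if not matches:
        raise LookupError(f"no route to {datagram.destination}")
    # The most specific (longest) matching prefix wins.
    best = max(matches, key=lambda network: network.prefixlen)
    return routes[best]

if __name__ == "__main__":
    for destination in ("10.1.2.3", "10.2.3.4", "192.0.2.1"):
        print(destination, "->", next_hop(Datagram(destination, b"anything")))
```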

These protocols were trialed on a number of nodes within the ARPANET, and the standards for TCP and IP were officially published in 1981. What was exceedingly clever about this suite of protocols was its generality. TCP and IP did not care which carrier technology transmitted their packets, whether it be copper wire, fiber optic cable, or radio. The suite also imposed no constraints on what applications could do with the bits it carried.

Source: David D. Clark, Designing an Internet

This gave a lot of freedom to the system and injected a ton of potential into it. Any use case that could be thought of could be built and distributed to any machine with an IP address in the network. However, even then, it was difficult to foresee just how massive the Internet would one day become.

David Clark, one of the architects of the original Internet, wrote in 1978 that “we should … prepare for the day when there are more than 256 networks in the Internet.” He now looks upon that comment with some humor. Many assumptions about the nature of computer networking changed from that time until now, primarily the explosion in the number of personal computers. Today, billions of individual devices are connected across hundreds of thousands of smaller networks. Remarkably, they all still do so using IP.

Though ARPANET was decommissioned in 1990, the rest of the connected computers kept going. Residences with personal computers used dial-up to get email access, and following 1989, a new virtual knowledge base was invented with the World Wide Web.

New infrastructure, consisting of web servers, emerged to ensure the Web was always available to users, while programs like web browsers allowed end nodes to view the information and web pages stored in the servers.

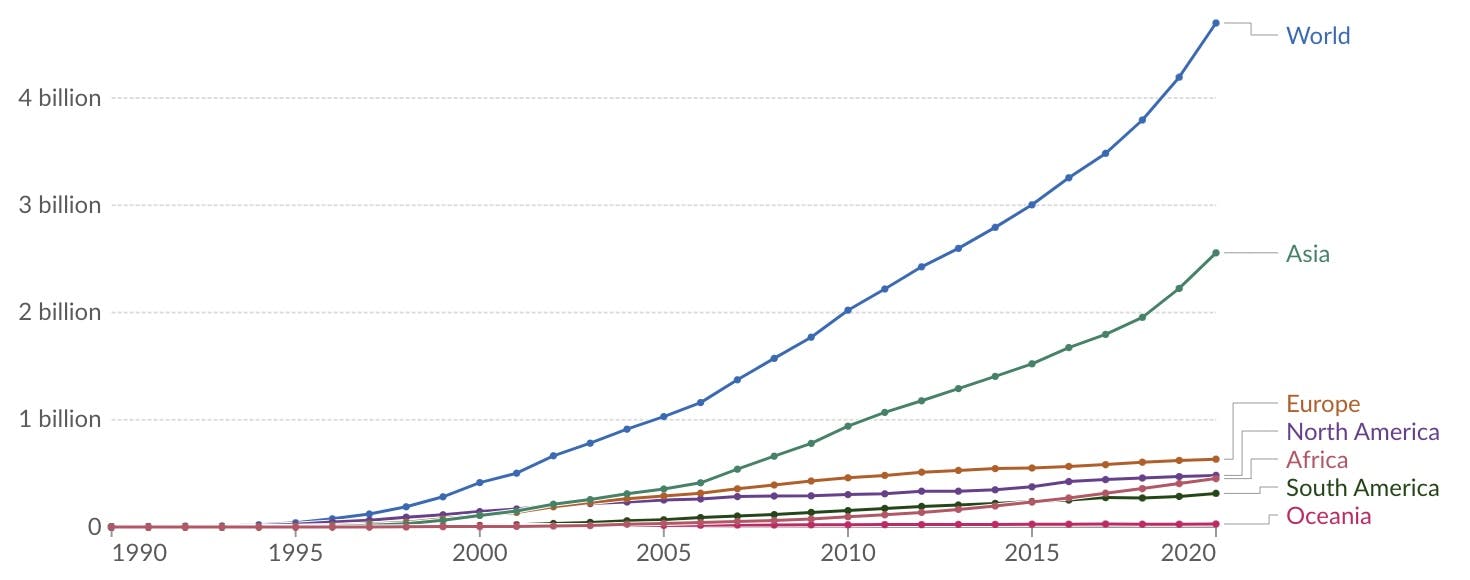

Source: Our World In Data

As the number of connected people increased in hockey-stick fashion, carriers finally began realizing that dial-up, which converts digital signals to analog ones, was not going to cut it anymore. They would need to rebuild the physical connectivity layer by making it digital-first.

The single biggest development that would alter the face of the Internet forever was the mass installation of fiber optic cable throughout the 1990s. Fiber optics use photons traveling through thin glass to increase the speed of information flow. The fastest connection possible with copper wire was about 45 Mbps, or 45 million bits per second. Fiber optics allowed that connection to become over two thousand times faster. Residences can now hook into a fiber optic connection that can deliver 100 Gbps, or 100 billion bits per second.
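The “two thousand times” figure follows directly from the two rates quoted above. The quick calculation below also shows what the difference means for moving a file. The 2-gigabyte file size is an arbitrary example, and real transfers would be slower because of protocol overhead.

```python
COPPER_BPS = 45_000_000        # 45 million bits per second
FIBER_BPS = 100_000_000_000    # 100 billion bits per second

def transfer_seconds(size_bytes: int, bits_per_second: int) -> float:
    """Ideal time to move a file, ignoring protocol overhead and latency."""
    return size_bytes * 8 / bits_per_second

SPEEDUP = FIBER_BPS / COPPER_BPS

if __name__ == "__main__":
    file_bytes = 2_000_000_000  # hypothetical 2 GB file
    print(f"Fiber is about {SPEEDUP:,.0f} times faster")
    print(f"Copper: {transfer_seconds(file_bytes, COPPER_BPS):.1f} s")
    print(f"Fiber:  {transfer_seconds(file_bytes, FIBER_BPS):.2f} s")
```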

While fiber was initially laid down by telecom companies that used it to offer high-quality cable television service to homes, the same lines would be used to provide Internet access to households. These service speeds were so fast, however, that a whole new category of behavior became possible online. Information was moving fast enough to make applications like video calling or video streaming a reality.

The connection was so good that, in time, video no longer had to go through the cable company’s digital link to your television; it could be transmitted through those same IP packets and viewed with the same experience on your computer.

YouTube debuted in 2005, while Netflix started streaming in 2007. The data consumption of households skyrocketed. Streaming a film or a show requires about 1 to 3 gigabytes of data per hour. Whereas in 2013 the median household consumed 20 to 60 gigabytes of data per month, today that number is around 587 gigabytes.

All said, while it may have been the government and small research groups that kickstarted the birth of the Internet, its evolution henceforth was dictated by market forces, including service providers that offered cheaper-than-ever communication channels and users that primarily wanted to use those channels for entertainment.

A New Kind of Internet

If the Internet imagined by Vinton Cerf and Robert Kahn was a distributed network of routers and endpoints that shared data in a peer-to-peer fashion, the Internet of our day is a wildly different beast.

The biggest reason for this is that the Internet is not really used for back-and-forth networking and communications. The vast majority of users treat it as a high-speed channel for content delivery.

Netflix now accounts for 15% of all Internet traffic worldwide. YouTube accounts for 11%. As of 2022, video streaming makes up nearly 58% of all Internet traffic.

This even shows up in Internet service provision statistics. Far more capacity is granted for downlink to end-nodes than for uplink. Typical cable speeds for downlink might reach over 1000 Mbps, but only about 35 Mbps are granted for uplink. It’s not really a two-way street anymore.

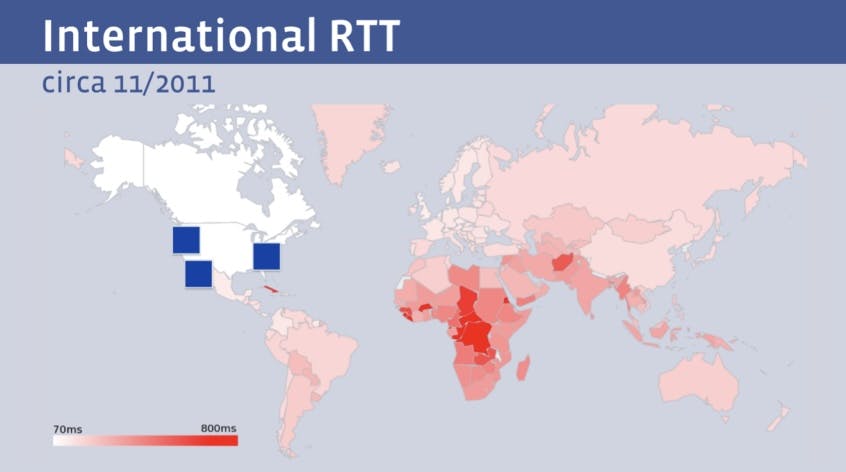

Even though the downlink speeds enabled by fiber were blazingly fast, the laws of physics still imposed some harsh realities for global Internet companies with servers headquartered in America. The image below shows the “round-trip time” for various global users to connect to Facebook.

Source: Geoff Huston

Facebook users in Asia or Africa had a completely different experience because their connection to a Facebook server had to travel halfway around the globe while users in the US or Canada could enjoy nearly instantaneous service. To combat this, larger companies like Google, Facebook, Netflix, and others began storing their content physically closer to users through CDNs, or “content delivery networks.”
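A back-of-the-envelope lower bound shows why distance matters. Light in optical fiber travels at roughly two thirds of its vacuum speed, or about 200,000 km per second. The distances below are round illustrative figures, not measurements, and real round-trip times are higher because cables do not follow straight lines and every router along the way adds delay.

```python
FIBER_KM_PER_SECOND = 200_000  # approximate speed of light in glass fiber

def min_round_trip_ms(distance_km: float) -> float:
    """Physical lower bound on round-trip time over a fiber path of this length."""
    return 2 * distance_km / FIBER_KM_PER_SECOND * 1000

if __name__ == "__main__":
    # Hypothetical one-way path lengths from a user to the nearest server.
    for label, km in [("nearby cache", 50), ("same continent", 4_000), ("other side of the globe", 15_000)]:
        print(f"{label}: at least {min_round_trip_ms(km):.1f} ms")
```

No amount of bandwidth removes this floor, which is why moving a copy of the content closer to the user is the only way to shrink it.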

These hubs would store caches of the websites’ data such that global users wouldn’t need to ping Facebook’s main servers; they could merely interact with the CDNs. The largest applications realized that, in fact, they could go even further than this. If their client base was global, they had an economic incentive to create a global service infrastructure too. Rather than simply owning the CDNs that host their data, why not own the literal fiber cable that connects servers from America to the rest of the world?

Throughout the beginning of the 2020s, the largest Internet companies have done just that. Most of the world’s submarine cable capacity is now either partially or entirely owned by a FAANG company. Below is a map of some of the sub-sea cables that Facebook played a part in financing.

Source: Telegeography

The cable systems these companies are laying down are increasingly impressive. Google, which is the sole owner of a number of sub-sea cables across the Pacific and Atlantic, can deliver hundreds of terabits per second through its infrastructure.

In other words, these applications became so popular that they effectively needed to move outside of the traditional Internet infrastructure and instead operate their services within their own, entirely contained private networks. These networks handle not only the physical layer but also create new transfer protocols, totally disconnected from IP or TCP, which handle data transfer within their own digital fiefdoms.

This kind of verticalization around an enclosed network has offered a number of benefits for such companies. If IP poses security risks that are inconvenient for these companies to deal with, they can just stop using IP. If the way TCP delivers data to the end-nodes is not efficient enough for the company’s purposes, they can create their own protocols to do it better.

On the other hand, the fracturing of the Internet from a common digital space to a tapestry of private networks raises some important questions regarding the future of the Internet as a public good.

For instance, as provision becomes more privatized, it is difficult to say on whose shoulders the responsibility of providing access to the Internet as a ‘human right’ will fall. A number of other issues plague a common network without a dedicated and resourceful steward.

The World Wide Web has become the de facto record of recent society’s activities. However, there is no one with the dedicated role of helping maintain and preserve these records. Already, the problem known as link rot is beginning to affect everyone from the Harvard Law Review, where according to Jonathan Zittrain three quarters of all links cited no longer function, to the New York Times, where roughly half of all articles contain at least one rotted link.

The consolation is that the story of the Internet is nowhere near over. It is a dynamic and constantly evolving structure. It could be that just as high-speed fiber optics reshaped how we use the Internet, forthcoming technologies may have a similarly transformative effect on the structure of our networks. SpaceX’s Starlink is already unlocking a completely new way of providing service to millions.

Its data packets, which travel to users via radio waves from Low Earth Orbit, may soon be one of the fastest and most economical ways of delivering Internet access to a majority of users on Earth. After all, the distance from LEO to the surface of the Earth is just a fraction of the length of subsea cables across the Atlantic and Pacific oceans. Astranis, another satellite Internet service provider that parks its small sats in geostationary orbit, may deliver a similarly game-changing service for many. Internet from space is a revolutionary idea that could one day become a kind of common global provider. We will need to wait and see what kind of opportunities a sea change like this may unlock.
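The distance argument can be checked with rough numbers. The sketch below computes the minimum time for a radio signal to travel from the ground up to a satellite directly overhead and back down. The altitudes are approximate assumptions, about 550 km for a low Earth orbit shell and 35,786 km for geostationary orbit, and real latency would be higher once ground stations, processing, and the rest of the route are included.

```python
LIGHT_KM_PER_SECOND = 299_792.458  # speed of light in vacuum

def min_hop_ms(altitude_km: float) -> float:
    """Lower bound on ground -> satellite -> ground travel time, satellite overhead."""
    return 2 * altitude_km / LIGHT_KM_PER_SECOND * 1000

LEO_ALTITUDE_KM = 550      # assumed, approximate
GEO_ALTITUDE_KM = 35_786   # geostationary altitude above the equator

if __name__ == "__main__":
    print(f"LEO hop: at least {min_hop_ms(LEO_ALTITUDE_KM):.1f} ms")
    print(f"GEO hop: at least {min_hop_ms(GEO_ALTITUDE_KM):.1f} ms")
```

Under these assumptions the low-orbit hop adds only a few milliseconds, while a geostationary hop adds a couple of hundred, so the two kinds of service start from very different latency floors.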

Still, it is undeniable that what was once a unified network has over time fractured into smaller spaces, governed independently of the whole. If the initial problems of networking concerned resolving the technical aspects of making digital communications feasible, the present and future considerations will center on the social aspects of a network, provided by private entities, used by private entities, but relied on by the public.
